Video2X - Enhancing Video Quality With Deep Learning

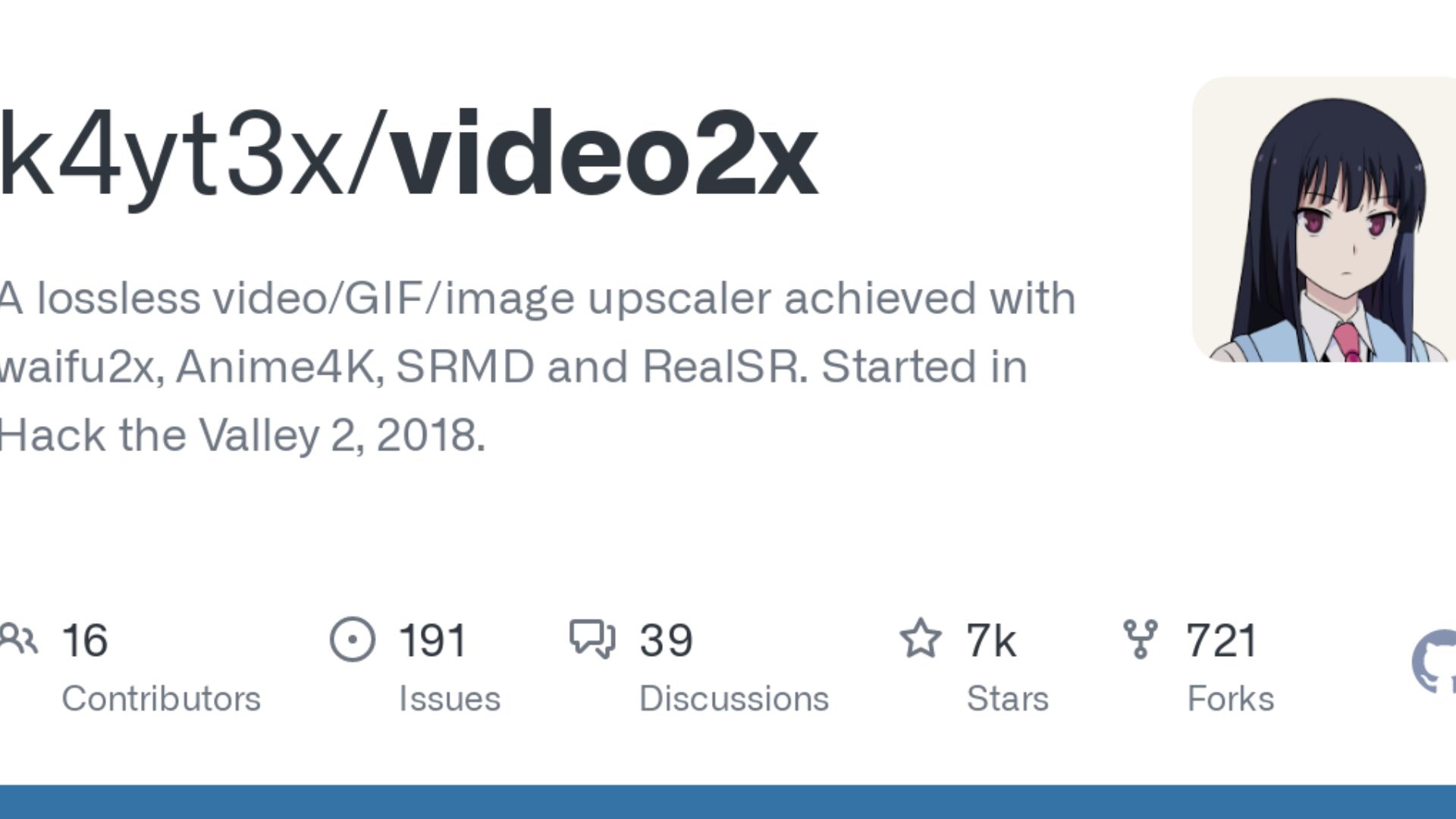


Author: James Pierce. Reviewer: Elisa Mueller. May 29, 2023

In recent years, advancements in deep learning have revolutionized the field of computer vision, enabling researchers to tackle challenging tasks such as image recognition, object detection, and video processing. One such breakthrough in video processing is Video2X, a powerful tool that leverages deep learning algorithms to enhance the quality of videos.

Video2X is an open-source project available on GitHub, developed by k4yt3x. It uses deep learning to improve the visual quality of videos, including upscaling low-resolution videos and increasing their frame rate. The tool has gained popularity among enthusiasts and professionals alike due to its results and ease of use.

Key Features Of Video2X

Video2X offers several key features that make it a powerful tool for video enhancement:

Video Upscaling

One of the primary functions of Video2X is to upscale low-resolution videos to higher resolutions. By employing advanced deep learning models, Video2X can intelligently infer missing details and produce visually sharper and more detailed output. This feature is particularly useful when dealing with old or low-quality video footage.

Frame Rate Enhancement

Video2X also excels in enhancing the frame rate of videos. By generating intermediate frames through deep learning algorithms, the tool can effectively increase the smoothness of video playback. This feature is especially valuable for converting videos captured at lower frame rates, such as vintage films, into more fluid and enjoyable viewing experiences.
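To make the idea of an intermediate frame concrete, here is a minimal Python sketch. It is not Video2X's method: learned interpolators estimate how objects move between frames, whereas this naive baseline simply cross-fades two neighboring frames. The function names `blend_frames` and `double_frame_rate`, and the representation of a frame as a 2D list of grayscale values, are assumptions made for illustration.

```python
def blend_frames(frame_a, frame_b, t=0.5):
    """Naive intermediate frame: per-pixel linear blend of two grayscale frames.

    frame_a, frame_b: equal-sized 2D lists of pixel intensities.
    t: position between the frames (0.0 gives frame_a, 1.0 gives frame_b).
    """
    same_shape = len(frame_a) == len(frame_b) and all(
        len(row_a) == len(row_b) for row_a, row_b in zip(frame_a, frame_b)
    )
    if not same_shape:
        raise ValueError("frames must have the same dimensions")
    return [
        [(1.0 - t) * a + t * b for a, b in zip(row_a, row_b)]
        for row_a, row_b in zip(frame_a, frame_b)
    ]


def double_frame_rate(frames):
    """Insert one blended frame between each consecutive pair of frames."""
    if not frames:
        return []
    output = []
    for current, following in zip(frames, frames[1:]):
        output.append(current)
        output.append(blend_frames(current, following))
    output.append(frames[-1])  # the last frame has no successor to blend with
    return output
```

A sequence of N frames becomes 2N - 1 frames. Cross-fading produces ghosting on fast motion, which is the weakness that motion-aware, learned interpolation is meant to address.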

Real-Time Processing

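Video2X incorporates optimization techniques aimed at fast, and in favorable cases real-time, video processing. Whether enhancement keeps pace with playback depends on the chosen model, the output resolution, and the available GPU. Where it does, users can enhance video quality on the fly, which suits applications such as live streaming, video conferencing, and gaming.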

User-Friendly Interface

Despite its complex underlying technology, Video2X strives to provide a user-friendly experience. It offers a straightforward command-line interface, making it accessible to both novice users and experienced developers. The project's GitHub repository provides detailed documentation and examples to assist users in getting started with Video2X.

The Technology Behind Video2X

Video2X leverages the power of deep learning, a subfield of artificial intelligence, to achieve its impressive video enhancement capabilities. At its core, Video2X utilizes convolutional neural networks (CNNs) and generative adversarial networks (GANs) to learn and replicate the patterns present in high-quality videos.

Convolutional Neural Networks

CNNs are a type of deep neural network designed to process grid-like visual data such as images and video frames. Each convolutional layer slides a set of small learned filters (kernels) across its input and records how strongly each region matches each filter. By stacking multiple convolutional layers, CNNs extract hierarchical features, from edges and textures in early layers to more complex visual patterns in deeper ones.
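The convolution operation described above can be sketched in a few lines of plain Python. This is an illustrative single-channel version with a hand-picked kernel, not code from Video2X; in a real CNN the kernel values are learned during training, and the helper names `convolve2d` and `relu` are assumptions.

```python
def convolve2d(image, kernel):
    """Valid-mode 2D convolution as used in CNN layers.

    Like most deep learning frameworks, this computes cross-correlation
    (the kernel is not flipped). image and kernel are 2D lists of numbers.
    """
    kernel_h, kernel_w = len(kernel), len(kernel[0])
    out_h = len(image) - kernel_h + 1
    out_w = len(image[0]) - kernel_w + 1
    if out_h <= 0 or out_w <= 0:
        raise ValueError("kernel must not be larger than the image")
    return [
        [
            sum(
                image[i + di][j + dj] * kernel[di][dj]
                for di in range(kernel_h)
                for dj in range(kernel_w)
            )
            for j in range(out_w)
        ]
        for i in range(out_h)
    ]


def relu(feature_map):
    """Non-linearity typically applied after a convolution: clamp negatives to zero."""
    return [[max(0, value) for value in row] for row in feature_map]


# A 3x4 image with a vertical edge between the second and third columns.
image = [
    [0, 0, 1, 1],
    [0, 0, 1, 1],
    [0, 0, 1, 1],
]
# A horizontal-gradient kernel responds where intensity rises from left to right.
edge_map = relu(convolve2d(image, [[-1, 1]]))
```

Here `edge_map` is non-zero only in the column where the edge sits, which is the sense in which a filter "detects" a feature.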

Generative Adversarial Networks

GANs are a class of deep learning models that consist of two components: a generator and a discriminator. The generator aims to produce realistic output samples, while the discriminator's task is to distinguish between real and generated samples. Through an adversarial training process, the generator learns to generate increasingly realistic samples, fooling the discriminator in the process.
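The adversarial objective can be written down compactly. The sketch below uses the standard binary cross-entropy formulation with the commonly used non-saturating generator loss; it is a generic illustration, not the training code of any model shipped with Video2X. The "scores" are assumed to be discriminator outputs in the range 0 to 1, where 1 means "judged real", and all function names are illustrative.

```python
import math


def binary_cross_entropy(prediction, target, eps=1e-12):
    """Cross-entropy between a predicted probability and a 0/1 target."""
    prediction = min(max(prediction, eps), 1.0 - eps)  # avoid log(0)
    return -(target * math.log(prediction) + (1.0 - target) * math.log(1.0 - prediction))


def discriminator_loss(scores_real, scores_fake):
    """The discriminator wants real samples scored 1 and generated samples scored 0."""
    real_term = sum(binary_cross_entropy(s, 1.0) for s in scores_real) / len(scores_real)
    fake_term = sum(binary_cross_entropy(s, 0.0) for s in scores_fake) / len(scores_fake)
    return real_term + fake_term


def generator_loss(scores_fake):
    """Non-saturating generator loss: the generator wants its samples scored 1."""
    return sum(binary_cross_entropy(s, 1.0) for s in scores_fake) / len(scores_fake)
```

The two losses pull in opposite directions on the same scores: a generated frame that the discriminator rates 0.9 gives the generator a low loss and the discriminator a high one.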

Video2X utilizes a combination of CNNs and GANs to enhance video quality. The generator network learns to generate high-resolution frames or interpolate intermediate frames, while the discriminator network helps guide the generator toward producing more authentic-looking results.

Applications Of Video2X

Video2X has a wide range of applications across various domains. Here are some of them:

Digital Restoration Of Historical Footage

Historical footage captured on older cameras or in low resolution can often suffer from degradation and loss of visual quality. Video2X can be employed to restore these videos, bringing them closer to their original quality. By upscaling the resolution and enhancing the frame rate, Video2X helps preserve and breathe new life into valuable historical content.

Improving Video Streaming Quality

Video streaming platforms are constantly striving to deliver high-quality content to their users. Video2X can be integrated into video streaming pipelines to enhance the visual quality of the streamed videos. By upscaling the resolution and improving the frame rate, Video2X ensures that viewers can enjoy a more immersive and visually appealing streaming experience.

Enhancing Video Conferencing

With the rise of remote work and online meetings, video conferencing has become an integral part of our daily lives. However, poor video quality can hinder effective communication. Video2X can be utilized to enhance the quality of video streams during video conferencing, resulting in clearer, sharper, and more professional-looking visuals.

Gaming And Virtual Reality (VR)

Video2X can play a significant role in the gaming and VR industry. By improving the resolution and frame rate of game visuals or VR content, Video2X enhances the overall gaming experience, making it more immersive and realistic. This technology can also be employed in retro gaming to upscale older games and provide a modernized visual experience to gamers.

Pre-Production Visualizations

During the pre-production phase of film and video production, creators often work with low-quality or rough footage to visualize their ideas. Video2X can be utilized to enhance the quality of these pre-production visualizations, providing a clearer representation of the intended final product. This can aid in decision-making and facilitate better planning and execution of the production process.


Limitations And Future Development

While Video2X offers impressive video enhancement capabilities, it's essential to acknowledge its limitations and potential areas for future development. Some of these considerations include:

Processing Time And Resource Requirements

Video2X's deep learning algorithms can be computationally intensive, requiring significant processing power and time, especially for large video files. Future optimization techniques and hardware advancements may help mitigate these challenges and improve the overall efficiency of the tool.
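A back-of-the-envelope calculation shows why large files are costly. The helper below is a hypothetical sketch, not part of Video2X: it counts frames and output pixels for an upscaling job and converts a throughput figure into a time estimate. The `frames_per_second_processed` value is something you would measure on your own hardware; its default is a placeholder, not a benchmark.

```python
def estimate_upscale_workload(width, height, fps, duration_seconds, scale=2,
                              frames_per_second_processed=1.0):
    """Rough size of an upscaling job.

    frames_per_second_processed: measured throughput of the upscaler on
    your hardware (placeholder default, not a benchmark).
    """
    if frames_per_second_processed <= 0:
        raise ValueError("throughput must be positive")
    total_frames = round(fps * duration_seconds)
    out_width, out_height = width * scale, height * scale
    return {
        "total_frames": total_frames,
        "output_resolution": (out_width, out_height),
        "output_pixels": out_width * out_height * total_frames,
        "estimated_seconds": total_frames / frames_per_second_processed,
    }


job = estimate_upscale_workload(640, 480, fps=30, duration_seconds=60,
                                scale=2, frames_per_second_processed=2.0)
```

For this one-minute 640x480 clip at 30 fps, a 2x upscale means 1,800 frames and roughly 2.2 billion output pixels. At the assumed throughput of 2 frames per second, the job would take about 15 minutes.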

Artifact Generation

In certain scenarios, the video enhancement process may introduce artifacts or unwanted visual distortions. Researchers are actively working on refining the algorithms to minimize these artifacts and produce more visually pleasing results.

Training On Diverse Datasets

The performance of Video2X heavily depends on the quality and diversity of the training datasets. Continued efforts to train the models on diverse video datasets could lead to better generalization and improved enhancement results across a wider range of video content.

Customization And Fine-Tuning

While Video2X offers impressive out-of-the-box results, providing users with options for customization and fine-tuning the enhancement process could further enhance its versatility and cater to specific use cases and preferences.

Video2X Vs. Traditional Video Upscaling Methods

Video upscaling is the process of increasing the resolution of a low-resolution video so that it matches or approaches the quality of high-resolution footage. Traditional upscaling methods have long been available, but they often yield limited improvement and can soften or lose detail.

Video2X, on the other hand, employs deep learning techniques, specifically Convolutional Neural Networks (CNNs), to enhance video quality. Let's compare Video2X with traditional video upscaling methods to understand the advantages it offers.

Traditional Video Upscaling Methods

Traditional video upscaling methods usually rely on interpolation techniques, such as bilinear or bicubic interpolation, to increase the resolution of videos. These methods fill in each new pixel with a weighted average of the nearest original pixels, so they can only smooth between existing values rather than add new detail.

While they can provide a basic level of upscaling, they often result in blurry and less detailed images. The interpolation techniques fail to capture the complex patterns and structures present in high-resolution videos, resulting in limited improvement in visual quality.
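For reference, bilinear interpolation on a single grayscale frame can be sketched as follows. This is a plain-Python illustration of the traditional technique, written for clarity rather than speed; the function name and the pixel-center coordinate convention are choices made for this example.

```python
def bilinear_upscale(image, scale):
    """Upscale a 2D grayscale image by an integer factor with bilinear interpolation."""
    in_h, in_w = len(image), len(image[0])
    output = []
    for y in range(in_h * scale):
        # Map the output pixel center back into input coordinates, clamped to the image.
        src_y = min(max((y + 0.5) / scale - 0.5, 0.0), in_h - 1)
        y0 = int(src_y)
        y1 = min(y0 + 1, in_h - 1)
        wy = src_y - y0
        row = []
        for x in range(in_w * scale):
            src_x = min(max((x + 0.5) / scale - 0.5, 0.0), in_w - 1)
            x0 = int(src_x)
            x1 = min(x0 + 1, in_w - 1)
            wx = src_x - x0
            # Blend the four surrounding input pixels by their distance weights.
            top = (1 - wx) * image[y0][x0] + wx * image[y0][x1]
            bottom = (1 - wx) * image[y1][x0] + wx * image[y1][x1]
            row.append((1 - wy) * top + wy * bottom)
        output.append(row)
    return output


# A hard black-to-white edge becomes a gradual ramp after 2x upscaling.
ramp = bilinear_upscale([[0, 100]], 2)
```

The sharp step between 0 and 100 turns into the ramp 0, 25, 75, 100: every new value lies between its neighbors, which is why interpolated edges look blurry.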

Video2X - Deep Learning-Based Video Upscaling

Video2X takes a fundamentally different approach by leveraging deep learning algorithms, specifically CNNs, to enhance video quality.

By training on large datasets of high-resolution and low-resolution video pairs, Video2X's CNN learns to understand the intricate patterns and structures in high-quality videos. When faced with a low-resolution video, the trained CNN can intelligently infer missing details and generate visually sharper and more detailed frames.
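One common way to obtain such training pairs, assumed here for illustration rather than taken from the Video2X project, is to start from high-resolution frames and shrink them, so that the network sees the degraded input alongside the original it should reconstruct. The sketch below uses simple box averaging; the names `downsample` and `make_training_pair` are hypothetical.

```python
def downsample(image, factor):
    """Shrink a 2D grayscale image by averaging each factor x factor block."""
    height, width = len(image), len(image[0])
    if height % factor or width % factor:
        raise ValueError("image dimensions must be divisible by the factor")
    return [
        [
            sum(
                image[y * factor + dy][x * factor + dx]
                for dy in range(factor)
                for dx in range(factor)
            ) / factor ** 2
            for x in range(width // factor)
        ]
        for y in range(height // factor)
    ]


def make_training_pair(high_res, factor=2):
    """Return (low_res_input, high_res_target) for supervised super-resolution."""
    return downsample(high_res, factor), high_res


high_res = [
    [0, 2, 4, 6],
    [2, 4, 6, 8],
]
low_res, target = make_training_pair(high_res, factor=2)
```

During training, the network's output for `low_res` is compared against `target`, and its weights are adjusted to reduce the difference.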

Compared to traditional methods, Video2X offers significant advantages. The deep learning approach allows Video2X to capture complex relationships within videos, resulting in superior upscaling performance.

The generated frames exhibit more clarity, sharpness, and preserved details, making the upscaled videos visually appealing and closer to the quality of high-resolution videos.

Another advantage of Video2X is its ability to enhance the frame rate of videos. By generating intermediate frames, it can improve the smoothness of video playback, making the upscaled videos more enjoyable and realistic.

People Also Ask

What File Formats Does Video2X Support For Input And Output?

Video2X supports a variety of popular video file formats, including MP4, AVI, MKV, and more.

Is Video2X Suitable For Enhancing Live Streaming Video Quality?

It can be. Where the chosen model and hardware let Video2X process frames at least as fast as the stream delivers them, its real-time processing capabilities make it suitable for enhancing live-streaming video.

Can Video2X Be Used For Upscaling Videos Captured In Different Aspect Ratios?

Yes, Video2X can handle videos with different aspect ratios and intelligently adjust the resolution and enhance the quality accordingly.

Does Video2X Require An Internet Connection To Work?

No, Video2X is a standalone tool that can be used offline without an internet connection.

Are There Any Known Limitations Or Artifacts When Using Video2X?

While Video2X strives to produce high-quality results, there may be instances where artifacts or visual distortions are introduced during the enhancement process. Ongoing research and development are aimed at minimizing these limitations.

Conclusion

Video2X is an innovative tool that harnesses the power of deep learning to enhance video quality. With its video upscaling and frame rate enhancement capabilities, Video2X offers numerous applications in various domains, including digital restoration, video streaming, video conferencing, gaming, and pre-production visualization.

While Video2X has its limitations, ongoing research and development hold promising prospects for further improving its performance and expanding its capabilities. As deep learning continues to advance, tools like Video2X pave the way for a future where video content can be enhanced and enjoyed at unprecedented levels of quality and realism.
